Difference between revisions of "Oja's rule"

From Eyewire

m (Adds category) |


| (2 intermediate revisions by one other user not shown) | |||

| Line 1: | Line 1: | ||

| − | '''Oja's rule''', developed by Finnish computer scientist Erkki Oja in 1982, is a stable version of [[Hebb's rule]].<ref name="Oja82"> | + | <translate> |

| + | |||

| + | '''Oja's rule''', developed by Finnish computer scientist Erkki Oja in 1982, is a stable version of [[Hebb's rule]].<ref name="Oja82">Oja, Erkki (November 1982). [http://www.springerlink.com/content/u9u6120r003825u1 "Simplified neuron model as a principal component analyzer"]. <em>Journal of Mathematical Biology</em> <strong>15</strong> (3): 267–273</ref> | ||

==Model== | ==Model== | ||

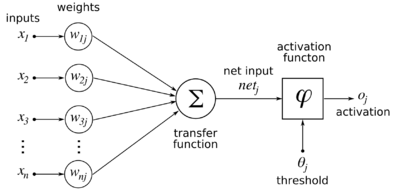

| − | [[File:ArtificialNeuronModel english.png|thumb|right|400px|Model of a neuron. < | + | [[File:ArtificialNeuronModel english.png|thumb|right|400px|Model of a neuron. <em>j</em> is the index of the neuron when there is more than one neuron. For a linear neuron, the activation function is not present (or simply the identity function).]] |

| − | As with Hebb's rule, we use a linear neuron. Given a set of k-dimensional inputs represented as a column vector | + | As with Hebb's rule, we use a linear neuron. Given a set of k-dimensional inputs represented as a column vector [[File:Hebb1.png]], and a linear neuron with (initially random) [[Synapse|synaptic]] weights from the inputs [[File:Hebb2.png]] the output the neuron is defined as follows: |

| − | + | [[File:Hebb3.png|center]] | |

Oja's rule gives the update rule which is applied after an input pattern is presented: | Oja's rule gives the update rule which is applied after an input pattern is presented: | ||

| − | + | [[File:Oja1.png|center]] | |

| − | Oja's rule is simply Hebb's rule with weight normalization, approximated by a Taylor series with terms of | + | Oja's rule is simply Hebb's rule with weight normalization, approximated by a Taylor series with terms of [[File:Oja2.png]] ignored for n>1 since η is small. |

It can be shown that Oja's rule extracts the first principal component of the data set. If there are many Oja's rule neurons, then all will converge to the same principal component, which is not useful. [[Sanger's rule]] was formulated to get around this issue. | It can be shown that Oja's rule extracts the first principal component of the data set. If there are many Oja's rule neurons, then all will converge to the same principal component, which is not useful. [[Sanger's rule]] was formulated to get around this issue. | ||

| Line 21: | Line 23: | ||

[[Category: Neural computational models]] | [[Category: Neural computational models]] | ||

| + | |||

| + | </translate> | ||

Latest revision as of 03:23, 24 June 2016

Oja's rule, developed by Finnish computer scientist Erkki Oja in 1982, is a stable version of Hebb's rule.[1]

Model

As with Hebb's rule, we use a linear neuron. Given a set of k-dimensional inputs represented as a column vector $\vec{x} = [x_1, x_2, \ldots, x_k]^T$, and a linear neuron with (initially random) synaptic weights from the inputs $\vec{w} = [w_1, w_2, \ldots, w_k]^T$, the output of the neuron is defined as follows:

$$y = \vec{w}^T \vec{x} = \sum_{i=1}^{k} w_i x_i$$

Oja's rule gives the update rule which is applied after an input pattern is presented:

$$\Delta \vec{w} = \eta\, y\, (\vec{x} - y\, \vec{w})$$

where η is the learning rate. Oja's rule is simply Hebb's rule with weight normalization, approximated by a Taylor series with terms of $O(\eta^n)$ ignored for n>1 since η is small.
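
The Taylor approximation can be made explicit. The following is a sketch rather than the derivation from the original paper. It assumes that the weight vector has unit length before the update and that normalization uses the Euclidean norm. It writes the output as y = w^T x and the learning rate as η:

```latex
\begin{align}
\vec{w}' &= \frac{\vec{w} + \eta\, y\, \vec{x}}{\lVert \vec{w} + \eta\, y\, \vec{x} \rVert}
  && \text{(Hebbian step followed by normalization)} \\
\lVert \vec{w} + \eta\, y\, \vec{x} \rVert^{2}
  &= 1 + 2 \eta\, y\, (\vec{w}^{T} \vec{x}) + \eta^{2} y^{2} \lVert \vec{x} \rVert^{2}
   = 1 + 2 \eta\, y^{2} + O(\eta^{2}) \\
\lVert \vec{w} + \eta\, y\, \vec{x} \rVert^{-1}
  &= 1 - \eta\, y^{2} + O(\eta^{2}) \\
\vec{w}' &= (\vec{w} + \eta\, y\, \vec{x})(1 - \eta\, y^{2}) + O(\eta^{2})
   = \vec{w} + \eta\, y\, (\vec{x} - y\, \vec{w}) + O(\eta^{2})
\end{align}
```

Dropping the O(η²) remainder leaves the weight change η y (x − y w): the Hebbian term η y x plus a decay term −η y² w that keeps the weight vector close to unit length.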

It can be shown that Oja's rule extracts the first principal component of the data set. If there are many Oja's rule neurons, then all will converge to the same principal component, which is not useful. Sanger's rule was formulated to get around this issue.
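
Both claims can be checked numerically on a small example. The sketch below is an illustration only, not code from the original source. It assumes the update has the form Δw = η y (x − y w), uses synthetic zero-mean 2-D data built so that the direction of largest variance is known, and introduces hypothetical names (`oja_update`, `train_oja`, `make_samples`, `alignment`) and an arbitrary learning rate of 0.005. Two neurons started from different random weights are trained on the same data.

```python
import math
import random

def oja_update(w, x, eta):
    """Apply one step of Oja's rule and return the new weight vector."""
    y = sum(wi * xi for wi, xi in zip(w, x))  # linear neuron output y = w^T x
    return [wi + eta * y * (xi - y * wi) for wi, xi in zip(w, x)]

def train_oja(samples, eta=0.005, seed=0):
    """Start from small random weights and present each sample once."""
    rng = random.Random(seed)
    w = [rng.uniform(-0.5, 0.5) for _ in range(len(samples[0]))]
    for x in samples:
        w = oja_update(w, x, eta)
    return w

def make_samples(n=5000, angle=math.pi / 6, seed=1):
    """Zero-mean 2-D data with its largest variance along direction `angle`."""
    rng = random.Random(seed)
    u1 = (math.cos(angle), math.sin(angle))    # high-variance direction
    u2 = (-math.sin(angle), math.cos(angle))   # low-variance direction
    samples = []
    for _ in range(n):
        a = rng.gauss(0.0, 2.0)   # standard deviation 2.0 along u1
        b = rng.gauss(0.0, 0.5)   # standard deviation 0.5 along u2
        samples.append([a * u1[0] + b * u2[0], a * u1[1] + b * u2[1]])
    return samples, u1

def alignment(w, u):
    """Absolute cosine of the angle between w and the unit vector u."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return abs(sum(wi * ui for wi, ui in zip(w, u))) / norm

samples, u1 = make_samples()
w_a = train_oja(samples, seed=0)   # first neuron
w_b = train_oja(samples, seed=7)   # second neuron, different initial weights
norm_a = math.sqrt(sum(wi * wi for wi in w_a))

print("neuron A weights:", w_a, "norm:", norm_a)
print("neuron B weights:", w_b)
print("alignment A:", alignment(w_a, u1))
print("alignment B:", alignment(w_b, u1))
```

Under these assumptions both weight vectors should end up close to unit length and nearly parallel (up to sign) to the high-variance direction, which is why a second neuron trained with the same rule adds no new information.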

References

- Oja, Erkki (November 1982). "Simplified neuron model as a principal component analyzer". Journal of Mathematical Biology 15 (3): 267–273.