Difference between revisions of "Feedforward backpropagation"

Revision as of 16:10, 12 April 2012

Feedforward backpropagation is an error-driven learning technique popularized in 1986 by David Rumelhart (1942–2011), an American psychologist; Geoffrey Hinton (1947–), a British computer scientist; and Ronald Williams, an American professor of computer science.[1] It is a supervised learning technique: the desired outputs are known beforehand, and the task of the network is to learn to generate those outputs from the inputs.

Model

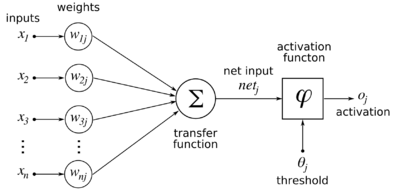

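Given a set of k-dimensional inputs represented as a column vector:

<math>\vec{x} = \left [ x_1, x_2, \cdots, x_k \right ]^T</math>

and a nonlinear neuron with synaptic weights from the inputs (initially random, uniformly distributed between -1 and 1):

<math>\vec{w} = \left [ w_1, w_2, \cdots, w_k \right ]^T</math>

then the output of the neuron is defined as follows:

<math>y = \varphi \left ( \vec{w}^T \vec{x} \right ) = \varphi \left ( \sum_{i=1}^{k} w_i x_i \right )</math>

where <math>\varphi \left ( \cdot \right )</math> is a sigmoidal function. We will assume that the sigmoidal function is the simple logistic function:

<math>\varphi \left ( \nu \right ) = \frac{1}{1+e^{-\nu}}</math>

This function has the useful property that

<math>\frac{d\varphi}{d\nu} = \varphi \left ( \nu \right ) \left ( 1 - \varphi \left ( \nu \right ) \right )</math>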
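The single-neuron model and the derivative property of the logistic function can be checked numerically. The following is a minimal sketch in plain Python; the function names (`logistic`, `logistic_derivative`, `neuron_output`, `numeric_derivative`) are illustrative choices and are not part of the original text.

```python
import math

def logistic(v):
    """Simple logistic function: 1 / (1 + exp(-v))."""
    return 1.0 / (1.0 + math.exp(-v))

def logistic_derivative(v):
    """Derivative computed from the identity phi'(v) = phi(v) * (1 - phi(v))."""
    y = logistic(v)
    return y * (1.0 - y)

def neuron_output(weights, inputs):
    """Output of one neuron: logistic of the weighted sum of its inputs."""
    return logistic(sum(w * x for w, x in zip(weights, inputs)))

def numeric_derivative(v, h=1e-6):
    """Central-difference estimate of the derivative, for comparison only."""
    return (logistic(v + h) - logistic(v - h)) / (2.0 * h)
```

Comparing `numeric_derivative(v)` with `logistic_derivative(v)` at a few moderate values of `v` should give agreement to several decimal places. The practical benefit of the identity is that the derivative can be obtained from the neuron's output alone, without re-evaluating the exponential.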

Feedforward backpropagation is typically applied to multiple layers of neurons, where the inputs are called the input layer, the layer of neurons taking the inputs is called the hidden layer, and the next layer of neurons taking their inputs from the outputs of the hidden layer is called the output layer. There is no direct connectivity between the output layer and the input layer.

If there are <math>N_I</math> inputs, <math>N_H</math> hidden neurons, and <math>N_O</math> output neurons, and the weights from inputs to hidden neurons are <math>w_{Hij}</math> (<math>i</math> being the input index and <math>j</math> being the hidden neuron index), and the weights from hidden neurons to output neurons are <math>w_{Oij}</math> (<math>i</math> being the hidden neuron index and <math>j</math> being the output neuron index), then the equations for the network are as follows:

<math>\begin{align}
y_{Hj} &= \varphi \left ( \sum_{i=1}^{N_I} w_{Hij} x_i \right ), j \in \left \{ 1, 2, \cdots, N_H \right \} \\
y_{Oj} &= \varphi \left ( \sum_{i=1}^{N_H} w_{Oij} y_{Hi} \right ), j \in \left \{ 1, 2, \cdots, N_O \right \}
\end{align}</math>

If the desired outputs for a given input vector are <math>t_j, j \in \left \{ 1, 2, \cdots, N_O \right \}</math>, then the update rules for the weights are as follows:

<math>\begin{align}
\delta_{Oj} &= y_{Oj} \left ( 1-y_{Oj} \right ) \left ( t_j-y_{Oj} \right )\\
\Delta w_{Oij} &= \eta y_{Hi} \delta_{Oj}\\
\delta_{Hj} &= y_{Hj} \left ( 1-y_{Hj} \right ) \sum_{k=1}^{N_O} \delta_{Ok} w_{Ojk}\\
\Delta w_{Hij} &= \eta x_i \delta_{Hj}
\end{align}</math>

where <math>\delta_{Oj}</math> is an error term for output neuron <math>j</math>, <math>\delta_{Hj}</math> is a backpropagated error term for hidden neuron <math>j</math>, and <math>\eta</math> is the learning rate. The sum in <math>\delta_{Hj}</math> runs over the hidden-to-output weights <math>w_{Ojk}</math>, because those are the connections through which hidden neuron <math>j</math> influences each output neuron <math>k</math>.
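The network equations and update rules can be put together into one training step. The following is a minimal sketch in plain Python, not a reference implementation. It assumes a single hidden layer with no bias terms (as in the model above), updates the weights after every example, and uses the hidden-to-output weights when backpropagating the error to the hidden layer. All function names are illustrative.

```python
import math
import random

def logistic(v):
    return 1.0 / (1.0 + math.exp(-v))

def init_weights(n_from, n_to, rng):
    """Weights w[i][j] from unit i to neuron j, uniform in [-1, 1]."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_to)] for _ in range(n_from)]

def forward(x, w_h, w_o):
    """Return the hidden-layer outputs y_H and output-layer outputs y_O."""
    n_h, n_o = len(w_h[0]), len(w_o[0])
    y_h = [logistic(sum(w_h[i][j] * x[i] for i in range(len(x)))) for j in range(n_h)]
    y_o = [logistic(sum(w_o[i][j] * y_h[i] for i in range(n_h))) for j in range(n_o)]
    return y_h, y_o

def train_step(x, t, w_h, w_o, eta):
    """Update w_h and w_o in place for one (input, target) pair."""
    y_h, y_o = forward(x, w_h, w_o)
    # Output error terms: delta_Oj = y_Oj (1 - y_Oj) (t_j - y_Oj)
    d_o = [y * (1.0 - y) * (tj - y) for y, tj in zip(y_o, t)]
    # Hidden error terms, computed before the output weights are changed.
    d_h = [y_h[j] * (1.0 - y_h[j]) * sum(d_o[k] * w_o[j][k] for k in range(len(d_o)))
           for j in range(len(y_h))]
    for i in range(len(y_h)):
        for j in range(len(d_o)):
            w_o[i][j] += eta * y_h[i] * d_o[j]   # Delta w_Oij
    for i in range(len(x)):
        for j in range(len(d_h)):
            w_h[i][j] += eta * x[i] * d_h[j]     # Delta w_Hij
    return y_o

def squared_error(x, t, w_h, w_o):
    """Sum of squared differences between targets and current outputs."""
    _, y_o = forward(x, w_h, w_o)
    return sum((tj - y) ** 2 for tj, y in zip(t, y_o))
```

Calling `train_step` repeatedly on the same example with a moderate learning rate (for instance `eta=0.5`) should reduce `squared_error` for that example. Computing the hidden error terms before modifying `w_o` matters: both sets of error terms are meant to be evaluated with the weights used in the forward pass.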

References

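- ↑ Rumelhart, David E.; Hinton, Geoffrey E.; Williams, Ronald J. (8 October 1986). "Learning representations by back-propagating errors". Nature 323 (6088): 533–536. doi:10.1038/323533a0. http://www.cs.toronto.edu/~hinton/absps/naturebp.pdf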